May 21, 2024

Clean Up Your 404 Errors

By Megalytic Staff - June 01, 2018

Why

Why should organizations take 404 errors seriously enough to plan clean-up initiatives around them? The main reasons would include user experience, search engine visibility, crawling activity and data integrity.

User experience: First and foremost, 404s are dead ends for users and can lead to site abandonment. Users who leave after hitting a 404 error drive up bounce and exit rates, and those abandoned visits can translate into lost sales and customers.

Data: Even if a user is patient and works around the 404 error, the extra time spent clicking around will skew the time-on-site and page-depth metrics for that session. Sites with major 404 issues might see misleading aggregate engagement metrics as a result of 404-related user behaviors. In addition, if custom 404 pages are not set up properly, a session that includes a 404 error might be interpreted by Google Analytics as having ended. Then, when the user returns to the site, another session might begin. For these reasons, 404s can undermine data integrity and the ability to make accurate assessments of user behaviors.

Search Visibility: A substantial volume of broken internal links can disrupt how link equity “flows” throughout a website. That’s unfortunate. But external links to a page that returns a 404? That’s a crying shame, because those links cannot be leveraged for referrals or link equity. If there are a large number of broken backlinks, we have two words for you, my friend: link reclamation!

Crawling: Googlebot will continue to crawl and “look” for 404 pages unless they are actively 410’d or redirected, or the links to them are removed. Even when the link in question is removed, once Googlebot has a record of a URL it has crawled before, it may well attempt to crawl it again. If 404 errors are occurring at scale, this is a massive waste of a finite resource: crawl budget. Crawl budget represents the average number of pages Googlebot will crawl over a given time period and how deep into the site it will go when it does. All sites have finite crawl budgets, although the more domain authority a website has, the larger its crawl budget will be. Still, for sites both big and small, you want Googlebot spending most of its time crawling real pages and not stumbling into dead ends.
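To make the difference between a 404, a 410 and a redirect concrete, here is a minimal Python sketch of a handler that answers retired URLs on purpose instead of letting them fall through to a 404. The paths in `MOVED` and `GONE` and the names `response_for` and `RetiredUrlHandler` are made up for illustration. In practice, these rules usually live in your web server or CMS configuration rather than in a hand-written handler.

```python
from http import HTTPStatus
from http.server import BaseHTTPRequestHandler

# Hypothetical retired URLs, for illustration only.
MOVED = {"/old-pricing": "/pricing"}   # page lives on at a new URL
GONE = {"/summer-sale-2017"}           # page was removed on purpose


def response_for(path):
    """Return (status, redirect_target) for a retired path, or None if it is not listed."""
    if path in MOVED:
        return HTTPStatus.MOVED_PERMANENTLY, MOVED[path]  # 301
    if path in GONE:
        return HTTPStatus.GONE, None                      # 410
    return None


class RetiredUrlHandler(BaseHTTPRequestHandler):
    """Answers retired URLs with 301 or 410; everything else gets a plain 404."""

    def do_GET(self):
        result = response_for(self.path)
        if result is None:
            self.send_error(HTTPStatus.NOT_FOUND)
            return
        status, target = result
        self.send_response(status)
        if target:
            self.send_header("Location", target)
        self.end_headers()
```

To try it locally, the handler can be passed to `http.server.HTTPServer(("localhost", 8000), RetiredUrlHandler)` and started with `serve_forever()`.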

Where

So, now that we’ve established why cleaning up 404 errors is critical, where can we find them? We’d recommend two options that are solid choices for overall analysis and clean up and two others that can be utilized in specific use cases.

Dedicated crawling tools – Every developer and digital marketer should have a reliable crawling tool on standby. Screaming Frog is among the best known, but Sitebulb is also growing in popularity. Crawling tools function independently of a web browser, letting users control when, where and how fast they crawl a site, and can even “mimic” different user agents, like Googlebot. User interfaces vary in their friendliness, but all have spreadsheet exports that can be used for 404 reporting.

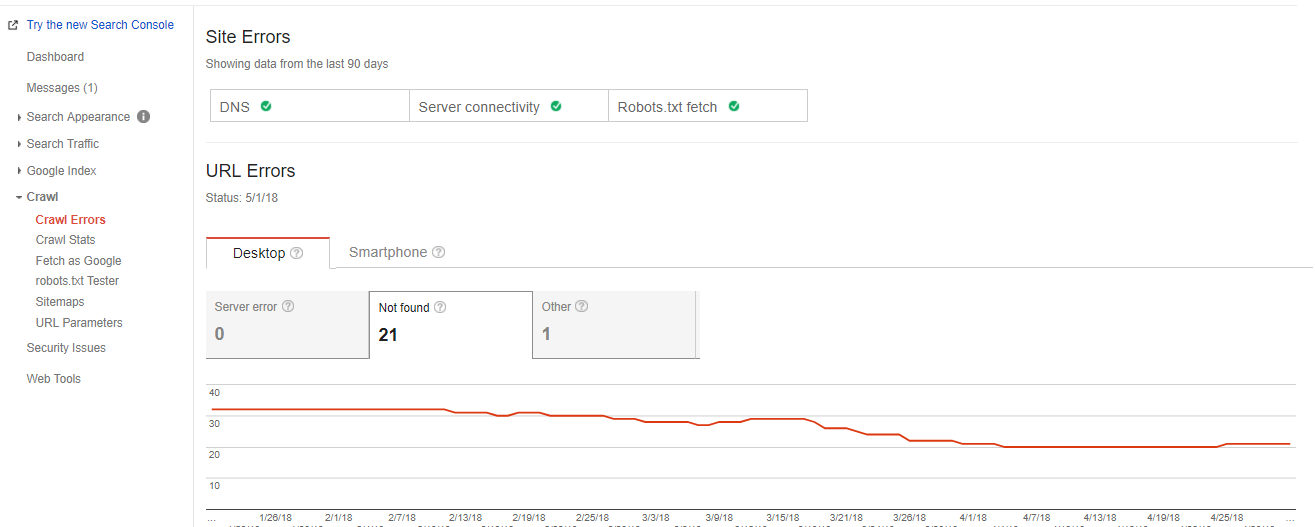

Google Search Console and/or Analytics – Google Search Console reports on 404s under Errors, which can be found in the main Crawl section of its left-hand menu.

If you use a dedicated plugin like MonsterInsights (formerly Google Analytics by Yoast), you can also easily find and analyze 404 errors right in the Content Drilldown section of Google Analytics.

Server logs – This source is a little more cumbersome and should only be used when there’s a very pervasive issue or when neither of the previous sources can be leveraged. Still, servers do maintain dedicated logs that a developer or development team should be able to parse into data that can be exported into a spreadsheet. Keep in mind that server logs can only reveal 404 errors for page requests that actually occurred; they won’t show 404 pages that haven’t been requested.
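As a rough idea of what that parsing might look like, here is a short Python sketch. It assumes the server writes its access log in the widely used Common or Combined Log Format, so check your own server’s format before relying on it. The function names `count_404s` and `write_report` and the sample log lines are ours, made up for illustration.

```python
import csv
import io
import re
from collections import Counter

# Pulls the request path and status code out of a Common/Combined Log Format line, e.g.
# 203.0.113.5 - - [21/May/2024:10:00:00 +0000] "GET /old-page HTTP/1.1" 404 153
LOG_LINE = re.compile(r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3})(?:\s|$)')


def count_404s(lines):
    """Return a Counter mapping each requested path to its number of 404 responses."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if match and match.group("status") == "404":
            hits[match.group("path")] += 1
    return hits


def write_report(hits, out):
    """Write path and hit count as CSV rows, most requested first, to a file-like object."""
    writer = csv.writer(out)
    writer.writerow(["path", "hits"])
    for path, count in hits.most_common():
        writer.writerow([path, count])


if __name__ == "__main__":
    # Made-up sample lines; in practice, pass an open log file to count_404s instead.
    sample = [
        '203.0.113.5 - - [21/May/2024:10:00:00 +0000] "GET /old-page HTTP/1.1" 404 153',
        '203.0.113.6 - - [21/May/2024:10:00:05 +0000] "GET / HTTP/1.1" 200 5120',
        '203.0.113.7 - - [21/May/2024:10:01:00 +0000] "GET /old-page HTTP/1.1" 404 153',
    ]
    report = io.StringIO()
    write_report(count_404s(sample), report)
    print(report.getvalue())
```

The resulting CSV opens directly in a spreadsheet, and sorting by hit count puts the most frequently requested dead URLs at the top of the clean-up list.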

Dedicated site subscriptions – There are now a variety of digital marketing subscription-based services that include basic “site health” monitoring, including 404 reporting. Moz is perhaps the most well known in that space, but there are others like Ahrefs Broken Link Checker and SEMRush’s Site Audit that report on 404s as well.

How

So, you’ve used one or two of the sources identified above and you have a list of 404 errors. Now what? How do you fix them? Here’s a consistent ten-step process you can use.

Step One:

Find your 404 errors (see Where) and compile them in one master table

Step Two:

Identify, for each 404 error, whether or not the page “should” still exist. Check the Wayback Machine if necessary for historical reference.

Step Three:

If the page should exist, then determine what happened. Did the page get removed altogether? Did the page move somewhere else, but the older page was never redirected properly? Was there simply a URL input error when a link was created?

Step Four:

If the page should exist and is down, use the Wayback Machine to find the most recent copy of the page and republish it.

Step Five:

If the page just moved URLs, update the internal link so it points to the page users should be going to.

Step Six:

If the page should not exist or never existed, then determine why it’s being crawled or found. Is it from an internal link or an external link from another source?

Step Seven:

If it’s an internal link, find any existing internal links and update them or remove them entirely.

Step Eight:

If it’s an external link to a page that never existed and should not exist, you can ignore it. If it’s a recurring page or page type, consider returning a 410 to signal to Google that the page is permanently gone.

Step Nine:

If the page shouldn’t exist anymore but it did exist at one time, consider republishing the URL and immediately redirecting it to the homepage or the most relevant category level page.

Step Ten:

Re-crawl the website and confirm the 404s have been resolved.
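If your master table has more than a handful of rows, the decisions in Steps Two through Nine can be encoded so that every 404 gets a consistent recommendation. The Python sketch below is one possible reading of those steps, not a definitive rule set. The `Broken404` fields and the `Action` names are hypothetical, and the yes/no answers still have to come from a person reviewing each URL.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class Action(Enum):
    RESTORE_PAGE = "Republish the most recent copy of the page (Step Four)"
    UPDATE_INTERNAL_LINKS = "Point internal links at the page's new URL (Step Five)"
    FIX_OR_REMOVE_INTERNAL_LINKS = "Update or remove the internal links (Step Seven)"
    IGNORE_OR_410 = "Ignore it, or return a 410 if it keeps recurring (Step Eight)"
    REDIRECT = "Redirect to the homepage or most relevant category page (Step Nine)"


@dataclass
class Broken404:
    url: str
    should_exist: bool               # Step Two: should this page still exist?
    moved: bool = False              # Step Three: does the page live at another URL now?
    existed_before: bool = False     # Did the URL ever serve a real page?
    linked_internally: bool = False  # Step Six: is it linked from your own site?


def triage(item: Broken404) -> List[Action]:
    """Map one row of the master table to the recommended clean-up actions."""
    if item.should_exist:
        return [Action.UPDATE_INTERNAL_LINKS if item.moved else Action.RESTORE_PAGE]
    actions = []
    if item.linked_internally:
        actions.append(Action.FIX_OR_REMOVE_INTERNAL_LINKS)
    if item.existed_before:
        actions.append(Action.REDIRECT)
    elif not item.linked_internally:
        actions.append(Action.IGNORE_OR_410)
    return actions


if __name__ == "__main__":
    # Made-up example row: a retired page that is still linked from the site.
    row = Broken404("/summer-sale-2017", should_exist=False,
                    existed_before=True, linked_internally=True)
    for action in triage(row):
        print(row.url, "->", action.value)
```

Writing the recommendation into a new column of the master table keeps the review auditable, and Step Ten still applies afterward: re-crawl and confirm.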

When

We have tools and processes for cleaning up 404s, and that’s great! But when should we stop and do a 404 clean-up? Here are the four most common times you want to review a site for 404 errors and take action:

- Anytime there’s been a site or information architecture change

- Anytime the CMS has been changed or significantly updated

- Anytime the site migrates to a new domain or a different domain migrates into this site

- Once annually, regardless of whether any of the three events above have occurred

Who

The last "W" here would, of course, be the "Who". Who should be responsible for 404 cleanups? We’d advocate for a team effort, but the individuals most commonly tasked with reviewing 404 errors are (usually) SEO professionals and web development teams. Still, even PPC, email and social media specialists should be aware of the issues 404 errors can cause. If you are investing in a digital marketing channel, you want to maximize your ROI and 404s can threaten that. No one wants to tweet or email blast a link that goes nowhere, nor is it worthwhile to spend money on ad clicks for dead-end links that only result in bounced site sessions. Whatever the size and arrangement of a digital marketing team, 404s require a periodic “all hands on deck” approach.

So, even if National Clean Up Your Room Day has passed, you can still work on your 404 spring cleaning. Once you pack up those winter clothes and organize your closets, do your potential site visitors and customers a big favor and clean up the 404s that are degrading your site. You won’t be sorry!